Network as a Service (NaaS) offers a flexible and responsive strategy for enabling the edge in all its forms. When organizations view their assets and resources, they all involve what communications services providers consider the edge. But from a client perspective, the edge IS the company. Located at the edge are the organization’s sites, assets, people, applications, productivity needs, and security challenges. Inter-networking these enables the business’s commercial activities and determines the success of the […]

Although blockchain may have originally been tailored for the process of financial transactions, its sequential recording of events makes it a dynamic and flexible technology that can be successfully applied to several business areas. With the increasing threat of hacking, data loss and security breaches, blockchain is in many ways setting a whole new standard for all types of transactions and business interactions. Thanks to the level of security it can offer, blockchain allows organizations […]

The Silicon Valley venture capital firm, Bessemer Venture Partners, meets with over a thousand cloud companies each year and keeps close tabs on its dozens of investments in the cloud market. Just to round things out, Bessemer also researches 78 cloud companies that have already gone public, keeping tabs on their performance so as to better advise their still-private investments. All of this data comes together in the firm’s annual State of the Cloud report. Here […]

Both DevOps and cloud computing have been hyped as essential to maintaining competitiveness and undertaking digital transformation in today’s organisation. While DevOps is about improving business processes and applications, cloud is all about the underlying services and technology. So how can businesses make the most of these? How cloud DevOps differs from other DevOps: When we talk about DevOps in terms of ideals, it doesn’t take long to mention “everything as code” or “immutable infrastructure”; […]

The benefits to the mobile industry of virtualization are clear, with a range of major advantages including cost reduction, scalability and the ability to offer a broad range of new services. Wireless Network Functions Virtualization (NFV) is the evolving initiative to deploy the benefits of virtualization already sweeping the IT infrastructure world into wireless networks of all types. In general, Network Functions Virtualization (NFV) (also known as virtual network function (VNF)) offers a new way to design, […]

HPE has identified the five key capabilities necessary for securing a hybrid cloud environment: Data-centric security: Protecting data is the core of security and compliance controls. Data needs to be secured at rest, in motion and while in use. It should maintain an index with a searchable data format after it is protected and the encryption should be multi-layered. Dynamic infrastructure hardening: Just like on-premises machines, the cloud infrastructure needs to be updated and hardened […]

New survey data reveal that some of the initial concerns about cloud and on-premises data and app integration, and unifying the user experience, continue to be significant challenges. Yet IT governance and the maturing of managing technology have led to a surprising agreement between the data center jockeys and their business customers. A Harvard Business Review Analytic Services poll in the fall of 2015, about cloud implementation experiences, yielded 341 respondents from around the world […]

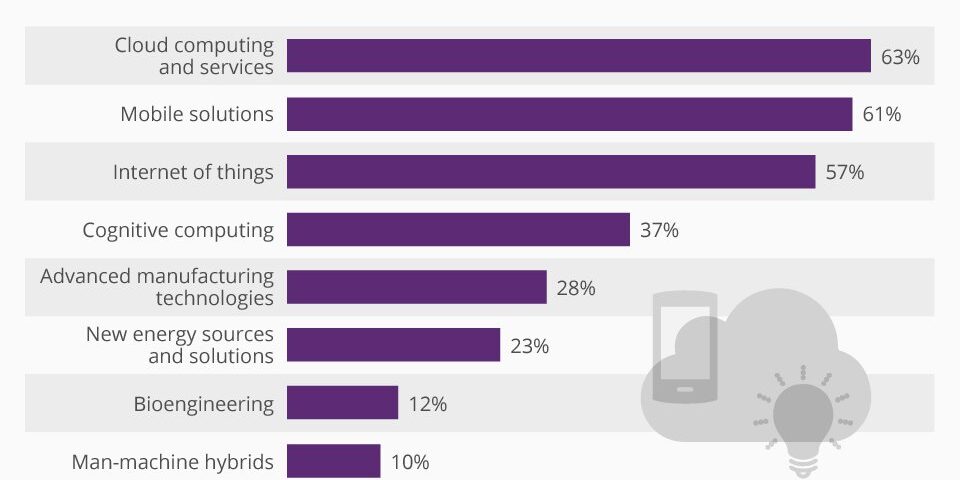

Cloud computing has been named the technology most likely to shape the future, according to an IBM survey. The poll questioned C-level executives from 70 countries on which technologies they thought would be particularly important in the next three to five years. Of all the respondents, 63% said cloud computing and related services would have the biggest impact by 2020. Next on the list was mobile solutions – named by more than three in five CxOs. The top three […]

How can overloads on the Internet be prevented? Cloud computing means that more server space can be rented from large computing resources. Ahmed Hassan has developed algorithms for automatic addition and removal of server resources for a web service based on demand. The study was performed at Umeå University in Sweden. When Michael Jackson passed in 2009, he almost took the Internet with him. CNN, Google News, TMZ, Twitter, Wikipedia, and most other news websites […]

Ten main reasons why cloud technology is taking center stage in call centers: Flexibility of OPEX over CAPEX. The shift from upfront capital costs to ongoing pay-as-you-go operational costs means that cloud-based solutions offer contact center managers a way to deploy new technology and scale up or down in a cost-effective way. For outsourcers and telemarketers who may have call volumes that vary dramatically depending on campaign levels, solutions with a flexible pay per usage […]

As a result of two rulings, each effective July 1, 2015, the city of Chicago will attempt to tax the “Cloud” more directly and comprehensively than any other U.S. jurisdiction. On June 9, 2015, the Chicago Department of Finance (the “Department”) issued rulings regarding (1) the application of the city’s Personal Property Lease Transaction Tax (the “Lease Transaction Tax”) to nonpossessory computer leases and (2) the application of the city’s Amusement Tax (the “Amusement Tax”) […]

Cloud storage is a solution that users are driving IT organizations to use whether we want to or not. Just ask a salesperson what they use. They will tell you how great it is and how they use it. As IT organizations, we need to take notice and understand the impact on our processes and the effect on the data stored outside of our organization. Here are five things commonly misunderstood about cloud storage: […]

According to a new report by cloud security company CipherCloud, compliance is the single biggest concern for large organizations looking at cloud adoption. The company surveyed its 100-plus large global customers and found that, for 64 percent, compliance was their top cloud security obstacle, followed by unprotected data for 32 percent. Of those concerned most about compliance, 58 percent said that cloud services violated data protection laws in their country, 31 percent said they violated […]

A recent survey by communications and enterprise IT service provider Tata Communications found that enterprise organizations with more than 500 employees saw tangible benefits to their cloud computing efforts. The survey results included 85 percent of the respondents saying that the cloud had lived up to the industry hype, while 23 percent said it had exceeded their expectations. According to the global survey, 83 percent of the enterprises felt they had experienced benefits they didn’t […]

As bank executives continue to debate, hesitate and worry over the security issues related to using applications that connect to the cloud, their employees are using cloud-based apps by the hundreds — often without banks’ knowledge. The average bank had 844 cloud services in use throughout its network in the third quarter of this year, according to an analysis conducted by Skyhigh Networks, far higher than bank IT departments estimated. “If you did a survey […]

Cloud computing is no longer just the realm of experimenters. Research suggests about 86 per cent of Australian companies now use a cloud service of some type, while the rest are more than likely to deploy a service in the coming years. Companies are finding cloud services provide the agility, flexibility and scalability they need to reduce the cost of doing business, or even completely changing the way they do it. Although cloud services are […]

Technical things go wrong. So what should businesses think about to ensure reliable and consistent operations with an added layer of complexity? The first step is recognizing that things will go wrong. Whether operations are in an in-house data center, an external commercial collocation data center, or in a hybrid cloud arrangement with workload split between in-house and cloud, the principles are the same. Cloud Isn’t New: No matter what marketing would have us believe, […]

The entire architecture around the modern endpoint has changed. This has truly become evident within the healthcare field. Associates, doctors and administrators are all processing healthcare information in completely new ways. IT consumerization and mobility have certainly played a big part through all of this. The evolution of the healthcare environment, however, is never truly complete unless we take a look at security considerations. How has the endpoint evolved to simplify security? How are we […]

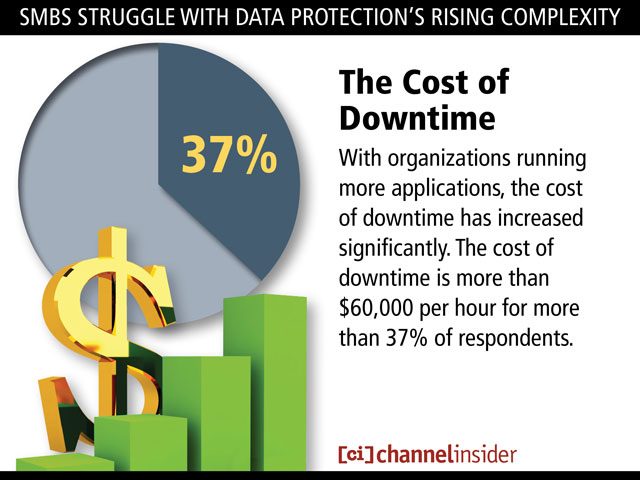

A survey of 401 global small and midsize businesses (SMBs) conducted by IDC on behalf of data protection specialist Acronis found that IT organizations are struggling with backup and recovery, thanks to the rise of virtualization and cloud computing. Backup and recovery becomes more problematic with each new platform added. Most IT organizations now routinely run multiple types of hypervisors in and out of the cloud, but these platforms are increasing the cost and complexity […]

There can be a tendency to think too simplistically about the benefits of moving applications to the cloud. Traditional logic centres around the cost savings that companies can make out of the shift from on-premise deployments but there is another, more important element of the cloud that should be given more credence – speed. The need for speed amongst enterprise organisations is critical, and, with growing understanding and competence, enterprise organisations are harnessing the potential […]

Improvements in hardware, software, and networking have combined with the secular trend toward outsourcing to usher in the era of cloud computing. The economies of scale offered by remote data centers managed by third parties allow enterprises to offload or outsource some or all of their computing and storage workloads. Cloud adoption is particularly cost-effective for smaller and midsize users that lack the capital, manpower, or expertise to build and maintain their own data centers. […]

1. Business and financial skills: The intersection of business and technology is always an overarching concern, but it is especially so when it comes to cloud-based computing. 2. Technical skills: With the cloud, organizations can streamline their IT resources, offloading much of day-to-day systems and application management. But that doesn’t mean IT abdicates all responsibility. There’s a need for language skills to build applications that can run quickly on the Internet. 3. Enterprise architecture and […]

The “cloudification of services” means that IT has become the backbone for every other part of the enterprise, and the department needs to evolve in order to keep up with the pace of change. That’s what Frank Slootman, president and CEO of ServiceNow, told the audience during his keynote at the platform-as-a-service IT solution provider’s Knowledge 14 conference, taking place at the Moscone Centre in San Francisco. “We really think IT needs to start thinking […]

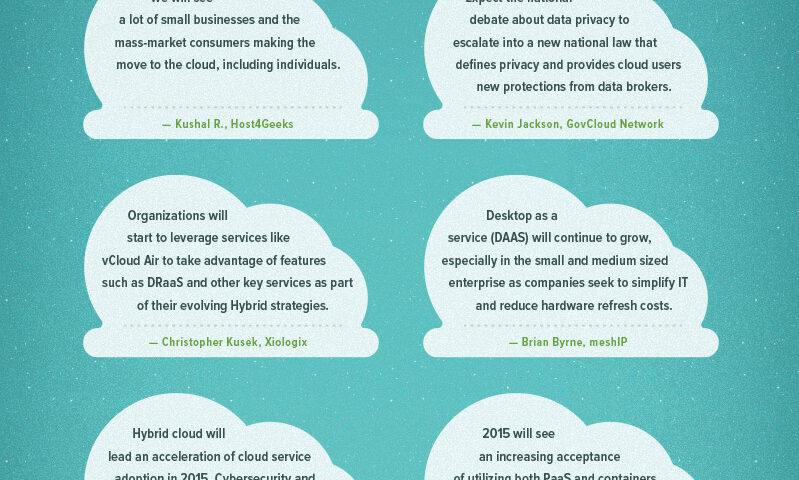

Many businesses considering moving their IT to a private cloud-based system will be looking at Desktop as a Service (DaaS), often referred to as a Hosted Desktop Service. However, many still don’t fully understand the business benefits this can bring. Cloud computing is something businesses can’t ignore as uptake accelerates around the world. In September 2012, analysts Gartner predicted that by 2016, the global cloud market will nearly double; and a paper last year […]

It’s a fact: more data is added via the Internet every second than the Internet had in its entirety twenty years ago. This accumulation of “Big Data” comes from many sources, including consumer and business files — including photos and images, spreadsheets, mobile application data, and more. In the past, much of this data was stored in the memory of computers and mobile devices. Now, data is increasingly being stored “in the Cloud,” which allows […]

Here are some key cloud computing statistics that highlight the growth and adoption trends of this strategic technology. Cloud spending: By 2015, end-user spending on cloud services could be more than $180 billion. By 2014, businesses in the United States will spend more than $13 billion on cloud computing and managed hosting services. It is predicted the global market for cloud equipment will reach $79.1 billion by 2018. 59 percent of all new spending on […]

In his latest book, titled “Architecting the Cloud: Design Decisions for Cloud Computing Service Models,” Mike Kavis, a seasoned chief technology officer and IT architect, shows how cloud proponents can map out their cloud plans. Mike identifies the six key questions that are essential to cloud architectural planning: 1) Identify the problem statement (why): “The single most important question to answer,” Kavis observes. While cloud is a no-brainer for startups, more established enterprises need […]

For a concept that seems so surreal, cloud computing is surprisingly ubiquitous. It is almost impossible to surf the internet without interacting with it in one form or another. Webmail services such as Google’s Gmail and Microsoft’s Hotmail are a well-known example of cloud computing at work, as are streaming services such as Netflix and YouTube. You’ve probably bumped into the concept at your workplace in the form of Microsoft’s Office 365 and Google Apps. […]

What’s the next big IT trend? Businesses want to know how they can leverage these trends, as well as how they can address any challenges. These trends, including the explosion of data, cloud computing and the multitude of personal devices on the corporate network, are fast changing the way organizations traditionally manage their business. The challenges and opportunities are more apparent than ever before, and look set to drive IT and business decisions in the […]